Shifting from Assumption-Based Readiness to Evidence-Backed Defense

Organizations frequently rate their own preparedness highly, yet empirical data shows that decision accuracy during incidents is far lower. The 2025 Cyber Workforce Benchmark Report found that 94% of organizations report high confidence while decision accuracy sits at 22%. This discrepancy persists in part because traditional security testing is heavily siloed.

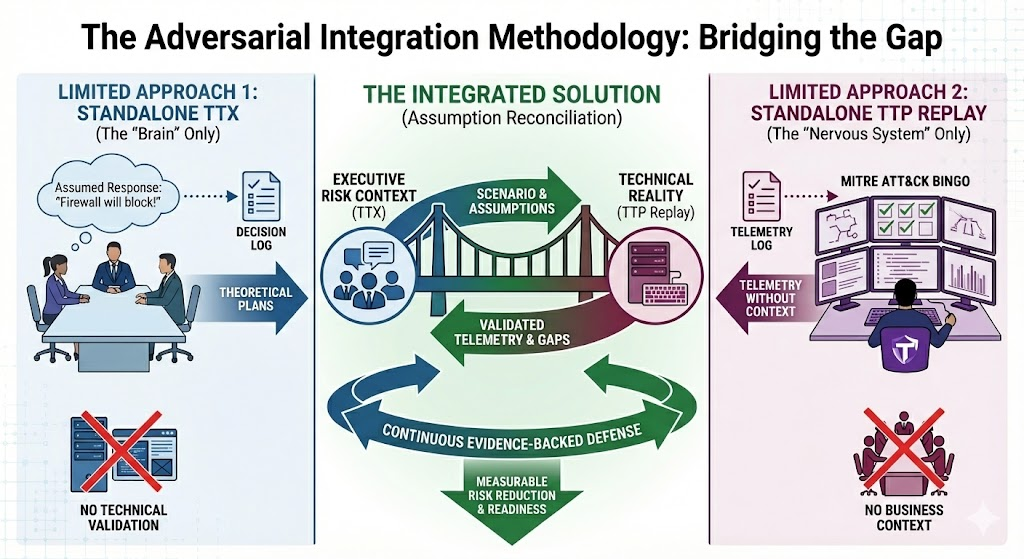

A Tabletop Exercise (TTX) functions as the organizational "brain." It evaluates human coordination, process maturity, and decision-making under pressure. However, a TTX relies entirely on assumed technical realities, such as the assumption that an Endpoint Detection and Response (EDR) tool will isolate a compromised host. Conversely, a Tactics, Techniques, and Procedures (TTP) Replay, often called Purple Teaming, acts as the "nervous system." It safely executes actual adversarial behaviors within the production environment to measure exactly what the technical stack detects and prevents.

Running a TTX without a TTP Replay creates theoretical plans based on unverified technical assumptions. Running a TTP Replay without a TTX generates raw technical telemetry devoid of executive business context or regulatory escalation pathways. Integrating the two methodologies transforms tabletop discussions into measurable operational truth, shifting the organization from compliance-driven assumptions to a continuous, evidence-backed defense model.

Executive FAQs

A: A TTX is a discussion-based simulation designed to stress-test the human and process layers of incident response. It exposes communication breakdowns, escalation failures, and decision bottlenecks. A TTP Replay is a live, hands-on-keyboard technical assessment where engineers safely execute real adversarial behaviors in the production environment to see if the security tools actually detect them.

A: Red teaming is designed to find a single path of least resistance to achieve an objective, which tests the organization's ability to stop a specific, targeted breach. It does not validate the broader detection engineering architecture. Integrated TTX and TTP Replay systematically validates whether the specific, high-priority threats discussed by leadership in a TTX are actually detectable by the security stack, providing broader and more measurable risk reduction.

A: Organizations spend millions on advanced security tools based on vendor claims of comprehensive protection. TTP Replay holds vendors accountable by empirically measuring control effectiveness. If a tool fails to detect the specific behaviors emulated during the replay, leadership gains the data needed to demand vendor remediation, tune the tool internally, or reallocate the budget to more effective controls.

A: Assumption-based readiness leads to false confidence, which extends adversarial dwell time when a real attack occurs. The longer an intrusion goes undetected, the more its financial impact compounds through prolonged operational downtime, higher incident response costs, brand damage, and potential regulatory fines.

Security Leadership FAQs

A: Without live-fire technical validation, the answer is unknown. Security teams frequently suffer from authority bias, trusting that a default tool configuration will catch advanced tradecraft. TTP Replay turns the assumption "we think we would catch that" into verifiable proof by mapping raw telemetry to the exact scenario discussed in the TTX.

A: Control coverage attempts to build detections for as many techniques in the MITRE ATT&CK framework as possible. This "MITRE ATT&CK bingo" approach wastes engineering resources on irrelevant threats and creates brittle alerts. Control effectiveness focuses on validating that your defenses actually work against the specific, high-probability threats that target your business.

A: Security leadership must track actionable metrics during a TTP Replay.

- Mean Time to Detect (MTTD): The exact time it takes the security stack to generate an actionable alert.

- Mean Time to Respond (MTTR): The time required for a human analyst or automated playbook to contain the threat.

- Alert Fidelity Rate (Signal-to-Noise Ratio): The percentage of alerts that accurately identify the emulated threat versus background noise.

- False Negative Rate: The frequency at which emulated behaviors bypass the security stack entirely without generating telemetry.
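The four metrics above can be computed from a simple log of replay results. The following is a minimal sketch, not a prescribed implementation: the `ReplayResult` record layout, the behavior labels, and the sample timings are all hypothetical, and it assumes response time is measured from the first alert to containment.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Optional


@dataclass
class ReplayResult:
    """One emulated behavior from a replay (hypothetical record layout)."""
    technique: str                    # label for the emulated behavior
    executed_at: datetime             # when the purple team ran it
    telemetry_seen: bool              # did the stack record anything at all?
    alerted_at: Optional[datetime]    # first actionable alert, if any
    contained_at: Optional[datetime]  # containment time, if any


def minutes(delta: timedelta) -> float:
    return delta.total_seconds() / 60


def replay_metrics(results, true_positive_alerts, total_alerts):
    """Summarize a replay; alert counts come from the SOC alert queue."""
    detect = [minutes(r.alerted_at - r.executed_at)
              for r in results if r.alerted_at is not None]
    # Assumption: response time runs from first alert to containment.
    respond = [minutes(r.contained_at - r.alerted_at)
               for r in results
               if r.alerted_at is not None and r.contained_at is not None]
    # Behaviors that produced no telemetry at all count as false negatives.
    silent = [r for r in results if not r.telemetry_seen]
    return {
        "mttd_minutes": mean(detect) if detect else None,
        "mttr_minutes": mean(respond) if respond else None,
        "alert_fidelity": (true_positive_alerts / total_alerts
                           if total_alerts else None),
        "false_negative_rate": (len(silent) / len(results)
                                if results else None),
    }


# Illustrative data only.
t0 = datetime(2025, 1, 1, 9, 0)
m = timedelta(minutes=1)
results = [
    ReplayResult("behavior-a", t0, True, t0 + 4 * m, t0 + 19 * m),
    ReplayResult("behavior-b", t0 + 30 * m, True, t0 + 40 * m, t0 + 65 * m),
    ReplayResult("behavior-c", t0 + 60 * m, True, None, None),   # logged, never alerted
    ReplayResult("behavior-d", t0 + 90 * m, False, None, None),  # no telemetry at all
]
metrics = replay_metrics(results, true_positive_alerts=2, total_alerts=8)
print(metrics)
```

Behaviors that were logged but never alerted on are kept out of the false negative rate here, matching the definition above; a team may prefer to report them as a separate "logged only" count.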

A: Instead of guessing which rules to write, detection engineers use the visibility gaps exposed during the TTP Replay to prioritize their work. If the replay reveals that Living-off-the-Land (LotL) binaries are bypassing the EDR, engineering resources are immediately directed to build SIEM correlation rules specifically for that blind spot.

Board and Audit Committee FAQs

A: Boards of Directors hold fiduciary responsibility for cyber risk oversight. Reviewing theoretical incident response plans alone is increasingly difficult to defend. Integrated testing provides boards with objective, quantitative data showing that the organization's security controls function as intended and that executive leadership can coordinate a rapid, compliant response.

A: The Securities and Exchange Commission (SEC) requires public companies to disclose a material cybersecurity incident within four business days of determining that it is material. Meeting this timeline requires fast, reliable technical detection combined with rapid executive materiality assessments. The TTP Replay validates the technical detection speed, while the TTX validates the cross-functional communication pathways required to make the legal materiality decision.
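To make the disclosure window concrete during a TTX, a facilitator can compute the filing deadline from the date of the materiality determination. The sketch below is illustrative only. The function name `disclosure_deadline` is hypothetical, the determination day itself is assumed not to count, and only weekends plus a caller-supplied holiday set are skipped, so counsel should confirm the actual filing date.

```python
from datetime import date, timedelta


def disclosure_deadline(determined_on, holidays=frozenset(), business_days=4):
    """Return the date that falls `business_days` business days after
    `determined_on`. Assumption: weekends and the supplied holidays
    are the only non-business days."""
    current = determined_on
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        # weekday() is 0-4 for Monday-Friday, 5-6 for the weekend.
        if current.weekday() < 5 and current not in holidays:
            remaining -= 1
    return current


# Materiality determined on Thursday, 6 March 2025.
deadline = disclosure_deadline(date(2025, 3, 6))
print(deadline)  # 2025-03-12

# Same determination date with a hypothetical holiday on Monday, 10 March.
shifted = disclosure_deadline(date(2025, 3, 6), holidays={date(2025, 3, 10)})
print(shifted)  # 2025-03-13
```

Walking through this calculation in the exercise shows how a determination made late in the week leaves the same number of working days but spans a weekend, which affects who needs to be reachable.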

Implementation and Operational FAQs

A: Modern adversarial emulation uses vetted, safe execution frameworks. The purple team executes actions strictly scoped to emulate the behavior without introducing destructive elements. For example, the team will execute a harmless payload that generates the exact same telemetry as ransomware, allowing the SOC to validate detections without putting actual business data at risk.

A: The integration follows a continuous feedback loop.

- Run a TTX and rigorously document all technical assumptions made by the participants.

- Translate those assumptions into an adversarial playbook.

- Execute the TTP Replay in production.

- Map the resulting telemetry back to the TTX assumptions to identify gaps.

- Apply engineering fixes and retest the specific failures to validate risk reduction.
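The mapping step in the loop above can be sketched as a comparison between what TTX participants assumed and what the replay observed. This is a minimal illustration under stated assumptions: the `Assumption` record, the `Outcome` ordering, the behavior labels, and the sample data are all hypothetical rather than part of any particular tool.

```python
from dataclasses import dataclass
from enum import IntEnum


class Outcome(IntEnum):
    """Ordered from weakest to strongest defensive result."""
    MISSED = 0       # no telemetry
    LOGGED_ONLY = 1  # telemetry, but no alert
    DETECTED = 2     # actionable alert raised
    PREVENTED = 3    # control blocked the behavior


@dataclass(frozen=True)
class Assumption:
    id: str
    statement: str     # what participants assumed during the TTX
    behavior: str      # playbook step that tests the assumption
    expected: Outcome  # result the participants took for granted


def find_gaps(assumptions, observed):
    """Return (assumption, actual) pairs where the replay fell short.
    An actual value of None means the behavior was never replayed."""
    gaps = []
    for a in assumptions:
        actual = observed.get(a.behavior)
        if actual is None or actual < a.expected:
            gaps.append((a, actual))
    return gaps


# Illustrative data only.
assumptions = [
    Assumption("A1", "EDR isolates the host", "mass-file-encryption", Outcome.PREVENTED),
    Assumption("A2", "SIEM alerts on credential dumping", "credential-dump", Outcome.DETECTED),
    Assumption("A3", "LotL binary abuse raises an alert", "lotl-execution", Outcome.DETECTED),
    Assumption("A4", "Lateral movement is alerted", "lateral-movement", Outcome.DETECTED),
]
observed = {
    "mass-file-encryption": Outcome.DETECTED,  # alerted, but not blocked
    "credential-dump": Outcome.DETECTED,
    "lotl-execution": Outcome.LOGGED_ONLY,
}

gaps = find_gaps(assumptions, observed)
retest_queue = [a.id for a, _ in gaps]
print(retest_queue)  # ['A1', 'A3', 'A4']
```

The resulting queue is what feeds the final step: each fix is retested against the same behavior until the observed outcome meets or exceeds the assumed one.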

A: Retesting should align with the principles of Continuous Threat Exposure Management (CTEM). While full-scale TTX engagements may occur annually or bi-annually, the technical retesting of specific, remediated playbooks should occur continuously as detection engineers push updates to the SIEM or EDR platforms.

The 5-Level Evidence-Backed Defense Maturity Model

Level 1

- Characteristics: Security is viewed purely as an audit function. Exercises are designed to pass compliance checks rather than uncover flaws.

- Risk Exposure: Critical. The organization possesses a high degree of false confidence.

Level 2

- Characteristics: Tabletops are conducted regularly, but participants assume technical controls will work perfectly. No technical validation occurs.

- Risk Exposure: High. Plans exist on paper but will likely fail during a live incident.

Level 3

- Characteristics: Red teaming and penetration testing occur, but the results are kept in technical silos and rarely inform executive risk discussions or TTX scenarios.

- Risk Exposure: Moderate. Technical visibility is improving, but executive coordination remains disjointed.

Level 4

- Characteristics: Technical assumptions made during the TTX are deliberately captured, translated into playbooks, and validated through live TTP Replay.

- Risk Exposure: Low. The organization actively quantifies and manages the discrepancies between theoretical plans and operational reality.

Level 5

- Characteristics: Aligns fully with CTEM frameworks. Adversary emulation continuously validates detection engineering, and the board receives dynamic risk reports based on measured control effectiveness.

- Risk Exposure: Minimal. The security posture is highly adaptable and audit-defensible.

References and Supporting Documentation

- Immersive Labs. (2025). 2025 Cyber Workforce Benchmark Report. This report details the global readiness illusion, noting that while 94% of organizations report high confidence, decision accuracy sits at 22%.

- Securities and Exchange Commission (SEC). (2023). Cybersecurity Risk Management, Strategy, Governance, and Incident Disclosure. Rules mandating Form 8-K disclosure of material incidents within four business days.

- National Institute of Standards and Technology (NIST). (2024). Cybersecurity Framework (CSF) 2.0. Guidance on governance, detection, and continuous improvement.

- MITRE Corporation. MITRE ATT&CK Framework. The global knowledge base of adversary tactics and techniques based on real-world observations.

- Gartner. (2022). Implement a Continuous Threat Exposure Management (CTEM) Program. Strategic framework for scoping, discovering, prioritizing, and validating security exposures.

Empowering Organizations to Maximize Their Security Potential.

Lares is a security consulting firm that has helped companies secure electronic, physical, intellectual, and financial assets since 2008 through a unique blend of assessment, testing, and coaching.

16+ years in business. 600+ customers worldwide. 4,500+ engagements.